About

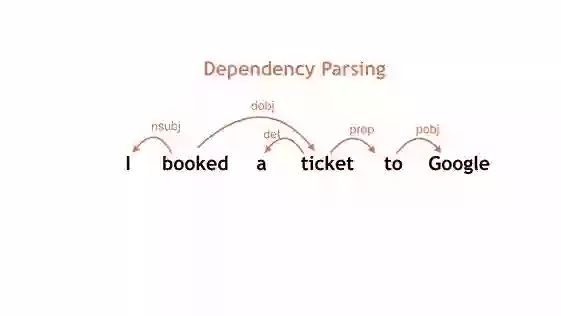

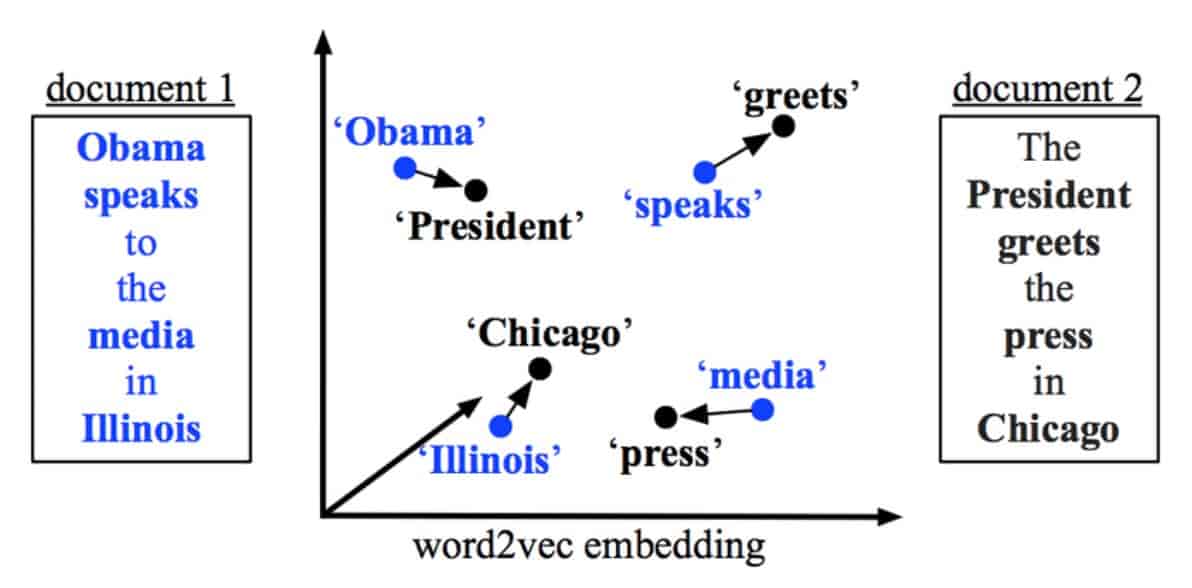

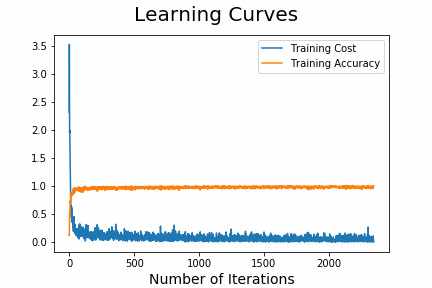

Hi, I am pursuing a Master's degree in Electrical Engineering (specialization in Robotics and Computer Vision) at the University of California, Riverside, which I began in Fall 2022. Alongside my studies, I work as a Graduate Student Researcher in the Visual Computing Group at UCR, focusing on 3D Computer Vision, Generative AI, and Temporally Continuous Pose Estimation for Videos. Previously, I worked as a Project Assistant at the Visual Computing Lab, IISc Bangalore, under the supervision of Dr. Anirban Chakraborty. My primary research interest lies at the intersection of Deep Learning and Computer Vision, where I focus on leveraging Unsupervised and Semi-supervised Learning techniques for domain adaptation. I also have exposure to other fields such as Natural Language Processing, Electronics, Communication Systems, CAD, Graphic Design, and Video Editing.

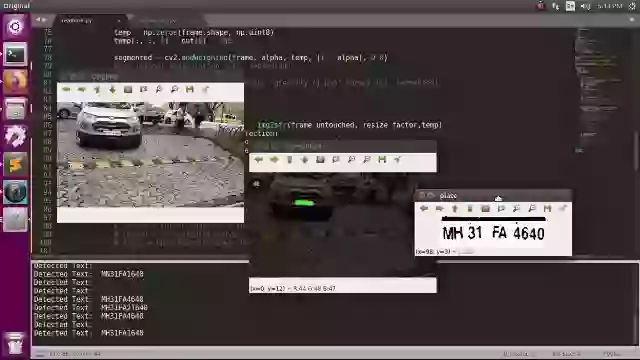

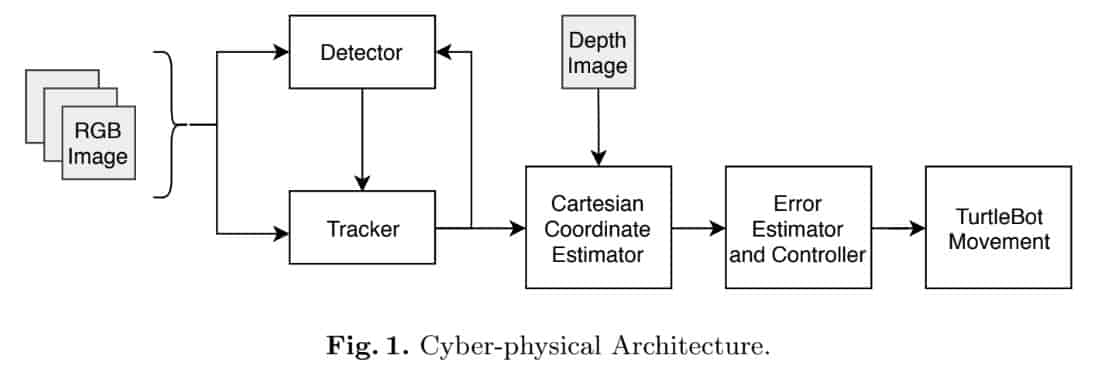

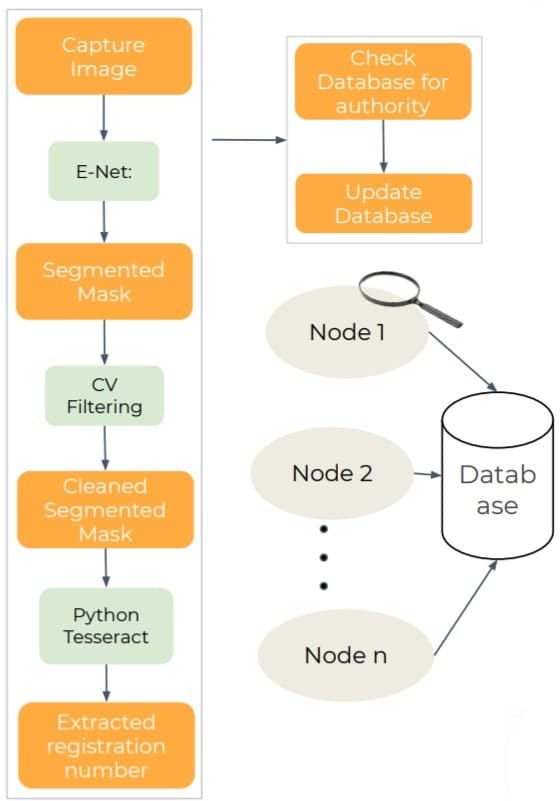

As an undergraduate student, I completed a research internship under Prof. Hongliang Ren at NUS. I also worked with a senior scientist at DRDO on a drone-image-based object detection and tracking project.

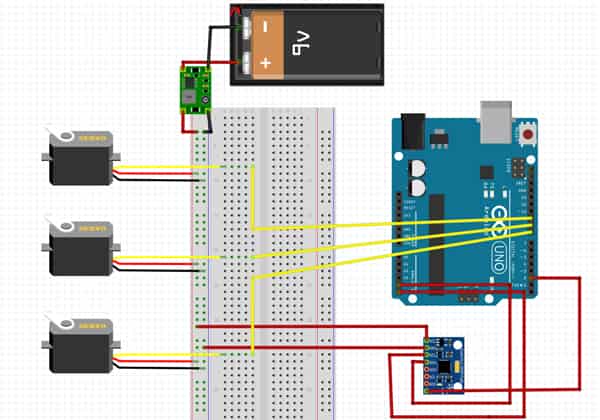

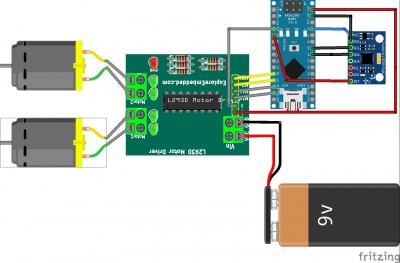

I completed my undergraduate degree at Visvesvaraya National Institute of Technology (VNIT), Nagpur, India (Batch of 2021). During my bachelor's, I worked extensively at IvLabs, the AI and Robotics Lab, under Dr. Shital Chiddarwar. As a core coordinator of the lab, I mentored motivated juniors and conducted workshops. I am open to collaborations and look forward to connecting with people working in similar domains!